- #Download spark 2.10. bin hadoop2.7 tgz line command how to

- #Download spark 2.10. bin hadoop2.7 tgz line command driver

- #Download spark 2.10. bin hadoop2.7 tgz line command full

- #Download spark 2.10. bin hadoop2.7 tgz line command software

Apache Spark 2.1.0 is the second release on the 2.x line. This release makes significant strides in the production readiness of Structured Streaming, with added support for event time watermarks and Kafka 0.10. In addition, it focuses more on usability, stability, and polish, resolving over 1200 tickets.

**Conclusion**: We covered the basics of setting up Apache Spark on an AWS EC2 instance. We ran both the Master and Slave daemons on the same node.

What if you shut down Master 1? Zookeeper will handle the selection of a new Master! When you stop Master 1, Master 2 will be elected as the new Master, and all Worker nodes will be attached to the newly elected master. If you want to visualize what's going on, open the Spark UI of Master 2: you'll see that Zookeeper elected Master 2 as the primary master and that all slave nodes are now attached to it.
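Master failover of this kind relies on Spark's ZooKeeper recovery mode. The sketch below is written for POSIX sh or bash. It assumes the `spark.deploy.recoveryMode`, `spark.deploy.zookeeper.url` and `spark.deploy.zookeeper.dir` properties from the Spark standalone documentation, set through `SPARK_DAEMON_JAVA_OPTS` in `conf/spark-env.sh`. The helper function names and the host names (`zookeeper`, `master1`, `master2`) are hypothetical.

```shell
# Sketch (POSIX sh/bash): settings behind ZooKeeper-based Master failover.
# Host names such as zookeeper, master1 and master2 are hypothetical.

# Print the spark-env.sh line that turns on ZooKeeper recovery mode.
zk_recovery_opts() {
  local zk_url="$1"              # ZooKeeper address, e.g. zookeeper:2181
  local zk_dir="${2:-/spark}"    # znode directory where Spark keeps its state
  printf 'SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=%s -Dspark.deploy.zookeeper.dir=%s"\n' \
    "$zk_url" "$zk_dir"
}

# Join Master hosts into one URL (spark://m1:7077,m2:7077) so that workers
# and applications can reconnect to whichever Master is elected.
master_url() {
  local port="$1"
  shift
  local url="spark://" sep="" h
  for h in "$@"; do
    url="${url}${sep}${h}:${port}"
    sep=","
  done
  echo "$url"
}

# Usage, on both Master nodes (SPARK_HOME assumed to be set):
#   zk_recovery_opts zookeeper:2181 >> "$SPARK_HOME/conf/spark-env.sh"
# and on each Worker node:
#   "$SPARK_HOME/sbin/start-slave.sh" "$(master_url 7077 master1 master2)"
```

Passing both Masters in one URL matters. A Worker started against only Master 1 has no way to find Master 2 after the election.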

Once all slave nodes are running, reload your master browser page. All Worker nodes will be attached to Master 1.

On each node, extract the software and remove the .tar.gz file. Make sure to repeat this step for every node.
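The extract-and-clean step can be sketched as a small shell function. The archive name `spark-2.3.2-bin-hadoop2.7.tgz` and the host names in the loop are assumptions. The function deletes the archive only if `tar` succeeds, so a corrupt download is not silently lost.

```shell
# Sketch: extract a Spark tarball in place, then delete the archive.
# Archive and host names are assumptions for illustration.
extract_and_clean() {
  local archive="$1"
  # -x extract, -z gunzip, -f archive file; rm runs only if tar succeeded
  tar -xzf "$archive" && rm -f "$archive"
}

# Usage on one node:
#   extract_and_clean spark-2.3.2-bin-hadoop2.7.tgz
# Repeating it on every node over SSH (hypothetical host names):
#   for host in node1 node2 node3; do
#     ssh "$host" "tar -xzf spark-2.3.2-bin-hadoop2.7.tgz && rm spark-2.3.2-bin-hadoop2.7.tgz"
#   done
```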

Apache Spark installation in Standalone Mode: how to download Apache Spark, then install and configure it on Ubuntu. Standalone mode is good enough for developing applications in Spark. Java must be pre-installed on every machine that will run Spark jobs. Connect via SSH to every node except the node named Zookeeper, and make sure each SSH connection is established. If you don't remember how to do that, you can check the last section of …

For the sake of stability, I chose to install version 2.3.2. If you want to choose version 2.4.0, be careful! Some software (like Apache Zeppelin) is not compatible with this version yet (end of 2018). From Apache Spark's website, download the .tgz file on each node.
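A minimal sketch of the prerequisite check and download for one node follows. It assumes the archive.apache.org directory layout for Spark releases and `wget` being available. The function names are hypothetical.

```shell
# Sketch: check for Java, then build the download URL for a Spark release.
# The archive.apache.org layout is an assumption; any Apache mirror works too.
spark_tgz_url() {
  local version="$1" hadoop="$2"
  echo "https://archive.apache.org/dist/spark/spark-${version}/spark-${version}-bin-hadoop${hadoop}.tgz"
}

check_java() {
  # Spark needs a Java runtime on every node
  if command -v java >/dev/null 2>&1; then
    echo "java found"
  else
    echo "java missing: install a JDK before Spark" >&2
    return 1
  fi
}

# Usage on each node (after connecting over SSH):
#   check_java && wget "$(spark_tgz_url 2.3.2 2.7)"
```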

Spark can be configured with multiple cluster managers like YARN, Mesos, etc. It can also run in standalone mode, which is the simplest way to deploy Spark on a private cluster. For this tutorial, I chose to deploy Spark in Standalone Mode. In its simplest form, the driver and worker nodes can even run on the same machine.
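Standalone mode is driven by the scripts in `$SPARK_HOME/sbin`. The sketch below only prints the commands so it is safe to run anywhere. It assumes Spark 2.x script names (`start-master.sh`, `start-slave.sh`; the latter was renamed `start-worker.sh` in Spark 3.x), the default Master port 7077, and a hypothetical Master host named `master1`.

```shell
# Sketch: commands that start a Spark 2.x standalone cluster.
# SPARK_HOME default and host names are assumptions.
SPARK_HOME="${SPARK_HOME:-$HOME/spark}"

# Run on the Master node; its web UI listens on port 8080 by default.
master_cmd() {
  echo "$SPARK_HOME/sbin/start-master.sh"
}

# Run on every Worker node; 7077 is the default Master port.
worker_cmd() {
  local master_host="$1"
  echo "$SPARK_HOME/sbin/start-slave.sh spark://${master_host}:7077"
}

# Usage (prints the commands to run on each machine):
#   master_cmd            # on the Master
#   worker_cmd master1    # on each Worker
```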

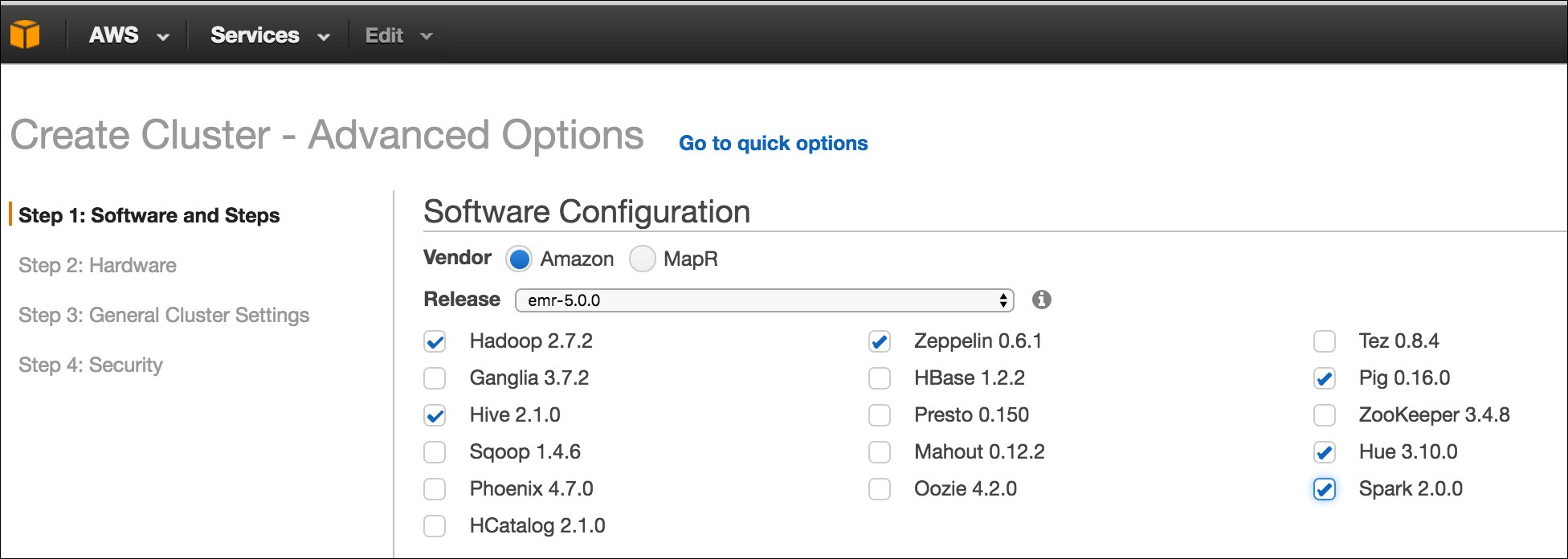

This topic will help you install Apache-Spark on your AWS EC2 cluster. The goal of this final tutorial is to configure Apache-Spark on your instances and make them communicate with your Apache-Cassandra cluster with full resilience. We'll go through a standard configuration that allows the elected Master to spread its jobs across Worker nodes. The "election" of the primary master is handled by Zookeeper.

This tutorial is divided into 5 sections, including:

- Launch your Master and your Slave nodes
- Add dependencies to connect Spark and Cassandra

Running `spark-2.0.0-bin-hadoop2.7` from a Windows command prompt can fail with `'Spark\spark-2.0.0-bin-hadoop2.7\bin\..\jars""\' is not recognized as an internal or external command, operable program or batch file.` or with "You need to build Spark before running this program."
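For the "Add dependencies to connect Spark and Cassandra" step, one common approach is to pull the DataStax Spark Cassandra Connector with `spark-shell --packages` and point it at Cassandra through `spark.cassandra.connection.host`. This is an assumption, since the original commands are not shown. In this minimal sketch, the connector coordinates (`spark-cassandra-connector_2.11:2.3.2`) and the Cassandra IP address are assumptions. Pick the connector release that matches your Spark and Scala versions.

```shell
# Sketch: build a spark-shell command that loads the Cassandra connector.
# Connector version and Cassandra host are assumptions.
CONNECTOR="com.datastax.spark:spark-cassandra-connector_2.11:2.3.2"

cassandra_shell_cmd() {
  local cassandra_host="$1"
  echo "spark-shell --packages $CONNECTOR --conf spark.cassandra.connection.host=$cassandra_host"
}

# Usage: print the command, then run it on a node that can reach Cassandra.
#   cassandra_shell_cmd 10.0.0.10
```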

Apache Spark 2.4.0 is the fifth release in the 2.x line. This release adds Barrier Execution Mode for better integration with deep learning frameworks, introduces 30+ built-in and higher-order functions that make complex data types easier to handle, and improves the K8s integration, along with experimental Scala 2.12 support.

Overview of real-time big data analysis:

- Big data: data that exceeds what a system, service, or organization can process within a given cost and time.
- The 4 Vs of big data: Volume (10 TB or more), Velocity (batch, near time, real time, streams), Variety (structured, unstructured, semi-structured), and Value.
- Hadoop: among the Apache projects, it sits on the database side and is moving toward infrastructure. The Apache™ Hadoop® project develops open-source software for reliable, scalable, distributed computing. The Apache Hadoop s…

URLs: Spark, Hadoop, Hadoop Applications, Hadoop Jobs, Zeppelin, Spark Download, Spark Documentation (`/docs/latest/index.html`), Flume repository, cafe.

Commands:

- `cp Downloads/hadoop_cnf/* hadoop/etc/hadoop/`
- `tar -zxf zeppelin-0.5.6-incubating-bin-all.gz`
- `ln -s zeppelin-0.5.6-incubating-bin-all zeppelin`
- `ssh hadoop02 "tar -zxf spark-1.4.0-bin-hadoop2.4.tgz"`
- `ssh hadoop02 "ln -s spark-1.4.0-bin-hadoop2.4 spark"`
- `cat input/NASDAQ_daily_prices_A.csv | head -5`
- `bin/flume-ng agent --conf conf/ -f conf/flume_avro.txt -Dflume.root.logger=DEBUG,console -n a1`
- `hadoop/bin/hadoop fs -cat /rlt01/part-00000`
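The two `ssh hadoop02` commands extract Spark 1.4.0 and create a `spark` symlink on a single host. A minimal sketch of repeating them on several hosts follows. The host names beyond `hadoop02` are hypothetical, and `DRY_RUN=1` only prints what would be executed.

```shell
# Sketch: repeat the remote extract + symlink on several hosts.
# Host names are hypothetical; set DRY_RUN=1 to print instead of running.
install_spark_on() {
  local host="$1" pkg="spark-1.4.0-bin-hadoop2.4"
  local remote="tar -zxf ${pkg}.tgz && ln -s ${pkg} spark"
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "ssh $host \"$remote\""
  else
    ssh "$host" "$remote"
  fi
}

# Usage:
#   for h in hadoop02 hadoop03; do install_spark_on "$h"; done
```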